Determining Fault in Self-Driving Car Accidents in California

Self-driving cars, equipped with advanced artificial intelligence and sensor technologies, are changing the way we commute. They promise increased safety, efficiency, and convenience. But as autonomous technology has changed how we travel every day, it has also raised new legal questions, including how to determine fault in a self-driving car accident in California.

Self-driving cars, also called autonomous vehicles or driverless cars, are automobiles equipped with advanced technologies that allow them to operate and navigate without human intervention. These vehicles use a combination of sensors, cameras, radar, lidar (light detection and ranging), GPS, and sophisticated software algorithms to perceive their environment, make decisions, and control the vehicle’s movements.

Key Summary:

- Self-driving cars, equipped with advanced AI and sensors, revolutionize commuting, promising increased safety, efficiency, and convenience.

- Autonomous vehicles operate without direct human intervention, using sensors, cameras, radar, lidar, GPS, and sophisticated software.

- The Society of Automotive Engineers (SAE) categorizes self-driving cars into Levels 0 to 5 based on automation levels.

- Self-driving car accidents can occur due to technical malfunctions, sensor limitations, misinterpretation of the environment, transition issues, human errors, and more.

- Responsibility in self-driving car accidents may lie with the vehicle manufacturer, software developer, sensor suppliers, human driver, fleet operator, regulatory authorities, infrastructure providers, and other road users.

- Factors affecting liability include data recording, real-time decision-making, handover issues, user training, cybersecurity, V2X communication, adherence to regulations, insurance frameworks, public perception, emergency response systems, and weather conditions.

- Proving negligence in self-driving car accidents involves establishing a duty of care, breach of duty, causation, proximate cause, and damages.

What are Self-Driving Cars?

Modern systems and technologies enable self-driving automobiles to function and navigate without the need for direct human intervention. These vehicles sense their environment, process information, and make judgments to regulate their motions using a variety of sensors, cameras, radar, lidar, GPS, and advanced software.

The primary goal of self-driving cars is to achieve a high level of automation in driving tasks, reducing or eliminating the need for human intervention. The development of self-driving cars is driven by the potential to improve road safety, increase transportation efficiency, and provide mobility solutions for individuals who may be unable or unwilling to drive.

Levels of Self-Driving Cars

The levels of self-driving cars, as defined by the Society of Automotive Engineers (SAE), are categorized on a scale from Level 0 to Level 5, representing different levels of automation and human involvement. Here’s a brief overview of each level:

- Level 0 (No Automation): At this level, there is no automation involved. The human driver is responsible for all aspects of driving, including control of the vehicle and monitoring the environment.

- Level 1 (Driver Assistance): In Level 1 automation, there is a single automated system, such as adaptive cruise control or lane-keeping assistance, that can assist the driver. However, the human driver must remain engaged and monitor the vehicle’s environment.

- Level 2 (Partial Automation): Level 2 involves two or more automated systems working together, such as adaptive cruise control and lane-keeping assistance. The vehicle can handle certain driving tasks, but the driver is still required to supervise and intervene if necessary.

- Level 3 (Conditional Automation): At Level 3, the vehicle can perform most driving tasks under specific conditions, such as highway driving. The driver can disengage from active control but must be ready to take over when the system requests it.

- Level 4 (High Automation): Level 4 vehicles are capable of fully autonomous driving in specific scenarios or environments, such as within a defined urban area or on dedicated lanes. In these situations, the vehicle operates without human intervention; outside them, a human driver may be required.

- Level 5 (Full Automation): At Level 5, vehicles are fully autonomous and capable of handling all driving tasks in all conditions without any human involvement. These vehicles may not need a steering wheel or pedals, as human control is not required.

The development and deployment of higher-level automation depend on advancements in technology, regulatory approvals, and addressing safety and ethical considerations.
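For readers who find a compact summary helpful, the levels above can be sketched as a simple lookup. This is only an illustration: the names `SAELevel` and `human_role` are hypothetical, and the role descriptions paraphrase the list above rather than SAE's official wording or any legal standard.

```python
from enum import IntEnum


class SAELevel(IntEnum):
    """SAE driving automation levels, as summarized in the list above."""
    NO_AUTOMATION = 0
    DRIVER_ASSISTANCE = 1
    PARTIAL_AUTOMATION = 2
    CONDITIONAL_AUTOMATION = 3
    HIGH_AUTOMATION = 4
    FULL_AUTOMATION = 5


def human_role(level: SAELevel) -> str:
    """Return a paraphrase of the human's role at a given level (illustrative only)."""
    if level <= SAELevel.PARTIAL_AUTOMATION:
        # Levels 0-2: the human drives or must supervise at all times.
        return "must drive or continuously supervise"
    if level == SAELevel.CONDITIONAL_AUTOMATION:
        # Level 3: the human may disengage but is the fallback.
        return "may disengage but must take over on request"
    if level == SAELevel.HIGH_AUTOMATION:
        # Level 4: no human needed inside the defined scenarios.
        return "not needed within the defined scenarios"
    # Level 5: no human needed anywhere.
    return "not needed in any conditions"


for level in SAELevel:
    print(f"Level {level.value} ({level.name}): human {human_role(level)}")
```

The split matters for fault because the human's expected role changes at Level 3: below it, the person behind the wheel is always expected to be supervising, while at Level 3 and above the system itself carries more of the driving task.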

Self-Driving Car Accidents

Self-driving car accidents are collisions or other incidents involving vehicles equipped with autonomous or self-driving technology. These incidents can occur for various reasons, and understanding the common causes is essential for improving the safety of autonomous vehicles. Some common reasons for self-driving car accidents include:

Technical Malfunctions

Autonomous vehicles rely on complex systems, including sensors, cameras, radar, lidar, and sophisticated software. Technical malfunctions in any of these components can lead to misinterpretation of the environment, potentially resulting in accidents.

Sensor Limitations

The sensors used in self-driving cars may have limitations, especially in adverse weather conditions (heavy rain, snow, fog) or low-light situations. Poor sensor performance can affect the vehicle’s ability to accurately perceive its surroundings.

Misinterpretation of the Environment

Autonomous systems may struggle to correctly interpret complex or dynamic traffic scenarios, road signs, and the behavior of other road users. This can lead to errors in decision-making and potentially result in accidents.

Transition from Autonomous to Manual Mode

Some self-driving systems require human drivers to take control in certain situations. If the transition from autonomous to manual mode is not seamless, or if the driver is not prepared to take over promptly, it can lead to accidents.

Human Errors

In cases where autonomous vehicles operate in mixed traffic conditions (with both self-driving and human-driven vehicles), accidents may occur due to errors made by human drivers. Autonomous vehicles might struggle to predict and respond to unpredictable behavior from human drivers.

Insufficient Testing and Validation

Inadequate testing and validation of autonomous systems can contribute to accidents. Comprehensive testing in diverse and complex environments is crucial to identify and address potential issues.

Regulatory Challenges

The lack of clear regulatory frameworks or standardized testing procedures for self-driving cars may contribute to accidents. A uniform set of regulations can help ensure that autonomous vehicles meet specific safety standards.

Cybersecurity Threats

As self-driving cars rely on interconnected systems and communication networks, cybersecurity threats pose a risk. Unauthorized access or malicious attacks on the vehicle’s software could lead to accidents or compromised safety.

Road Infrastructure Challenges

Inconsistencies in road markings, signs, and infrastructure can pose challenges for self-driving cars. The technology may struggle to navigate through construction zones, poorly marked roads, or areas with inadequate infrastructure support.

Who Can Be Responsible for Self-Driving Car Accidents?

Determining responsibility in self-driving car accidents can be complex and depends on various factors. The responsibility may lie with different entities involved in the development, deployment, and operation of autonomous vehicles. Some key stakeholders who might be considered responsible include:

Vehicle Manufacturer

The manufacturer of the self-driving car could be held responsible if the accident is attributed to a design flaw, manufacturing defect, or malfunction in the vehicle’s hardware or software.

Software Developer/Provider

Companies responsible for developing autonomous driving software and algorithms may be held accountable if the accident is traced back to a software error or failure.

Sensor and Hardware Suppliers

Entities providing the sensors, cameras, lidar, radar, and other hardware components used in autonomous vehicles could be implicated if their products are found to be faulty or inadequately designed.

Human Driver (if applicable)

In cases where the self-driving car operates with a human driver who is expected to take control in certain situations, the human driver may bear responsibility if they fail to intervene when necessary.

Fleet Operator/Owner

If the autonomous vehicle is part of a fleet and operated by a company or individual, the operator or owner might be held responsible for maintenance issues, ensuring the vehicle is in proper working condition, and adherence to safety protocols.

Regulatory Authorities

Regulatory bodies responsible for overseeing the deployment of autonomous vehicles may be questioned about the approval process, regulations, and standards in place. Inadequate regulation or oversight could contribute to accidents.

Infrastructure Providers

Entities responsible for road infrastructure might be considered if issues such as poor road markings, inadequate signage, or inconsistent infrastructure contribute to accidents involving self-driving cars.

Other Road Users

Human drivers, pedestrians, and cyclists sharing the road with self-driving cars may also play a role in accidents. Reckless or unpredictable behavior by other road users could contribute to collisions.

Responsibility often involves a combination of factors, and investigations into self-driving car accidents require a thorough examination of the circumstances leading to the incident. Legal frameworks and regulations may also influence the assignment of responsibility. As the technology and regulatory landscape evolve, there will likely be further developments in determining liability in self-driving car accidents.

Other Factors That May Affect Liability in Self-Driving Car Accidents

In addition to the primary stakeholders involved in the development and operation of self-driving cars, several other factors can influence liability in autonomous vehicle accidents. These factors may complicate the determination of responsibility or contribute to a shared responsibility scenario:

Data Recording and Analysis

The availability and accuracy of data recorded by the self-driving car’s sensors and systems can significantly impact liability. Comprehensive data analysis may help reconstruct the events leading up to the accident and identify contributing factors.

Real-time Decision-Making

The ability of the self-driving system to make real-time decisions based on sensor inputs and environmental conditions is critical. If the system fails to respond appropriately to a dynamic situation, questions of liability may arise.

Handover Issues (Level 3 Automation)

In vehicles with Level 3 automation, where drivers are expected to take control in certain situations, the effectiveness of the handover process between automated and manual modes can affect liability. Issues with transition procedures or delays in driver takeover may be considered.

User Training and Awareness

Liability may be influenced by the level of training and awareness provided to users or operators of autonomous vehicles. If users are not adequately informed about the capabilities and limitations of the technology, it could impact the assignment of responsibility.

Cybersecurity Measures

The effectiveness of cybersecurity measures to protect self-driving cars from unauthorized access or malicious attacks is crucial. If a cybersecurity breach contributes to an accident, questions of liability may extend to issues of system security.

Vehicle-to-Everything (V2X) Communication

V2X communication allows vehicles to communicate with each other and with infrastructure. The effectiveness of this communication in enhancing safety and preventing accidents may influence liability considerations.

Adherence to Regulations and Standards

Compliance with existing regulations and industry standards is an essential factor. Failure to adhere to established guidelines may impact liability for accidents involving self-driving cars.

Insurance Framework

The structure of insurance policies and how they address accidents involving self-driving cars can influence liability. Insurance companies may need to adapt their policies to account for the unique challenges posed by autonomous vehicles.

Public Perception and Trust

Public perception and trust in self-driving technology can influence legal proceedings and public opinion. Negative perceptions may impact how liability is assigned and how legal and regulatory frameworks evolve.

Emergency Response Systems

The effectiveness of emergency response systems, both within the vehicle and external emergency services, can impact the outcomes of accidents. Prompt and appropriate responses may mitigate liability concerns.

Weather and Environmental Conditions

Adverse weather conditions or challenging environmental factors may pose challenges for self-driving systems. Liability considerations may take into account the system’s ability to operate effectively in various conditions.

Proving Negligence in Driverless Car Accidents

Negligence is a legal concept that involves the failure to exercise reasonable care, resulting in harm to others. While the application of negligence principles to self-driving cars is a developing area of law, the traditional elements of negligence may still be relevant. These elements typically include:

Duty of Care

The first element of negligence is establishing that the party owed a duty of care to others. In the context of self-driving cars, this could involve demonstrating that the manufacturer, developer, or operator of the autonomous system owed a duty to design, manufacture, or operate the vehicle with reasonable care to prevent harm.

Breach of Duty

A plaintiff must show that the defendant breached the duty of care. In the case of self-driving cars, this could involve demonstrating a design flaw, manufacturing defect, inadequate testing, or failure to implement necessary safety features.

Causation

Causation requires establishing a direct link between the defendant’s breach of duty and the harm suffered by the plaintiff. In self-driving car accidents, this may involve proving that a specific failure in the autonomous system directly led to the accident.

Proximate Cause

Proximate cause, also known as legal cause, involves demonstrating that the harm suffered was a foreseeable consequence of the defendant’s breach. This might involve showing that the consequences of a system failure were reasonably foreseeable.

Damages

The plaintiff must show that they suffered actual damages, whether they are physical injuries, property damage, or other losses. Without tangible harm, a negligence claim may not be viable.

Your Legal Support in Establishing Self-Driving Car Accident Fault in California

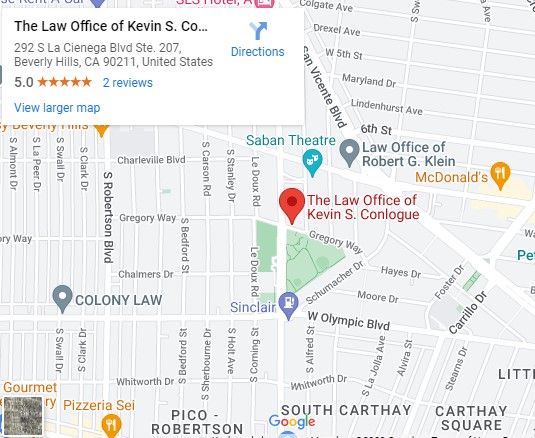

After an accident involving a self-driving car in California, navigating the legal, insurance, and technological complexities can be challenging. Your safety and well-being are of utmost importance, and taking the right steps can help protect your interests. If you find yourself in such a situation, consider seeking professional advice from a personal injury attorney at Conlogue Law LLP.

Conlogue Law LLP brings a wealth of experience to cases involving self-driving car accidents. Our team understands the nuances of this evolving field, and we can provide guidance tailored to your specific circumstances. Our commitment to client advocacy and a comprehensive understanding of autonomous vehicle technology make us a valuable resource in navigating driverless car crashes.

Whether you need legal representation in a self-driving car accident or another personal injury case, require a trial attorney, or are dealing with a civil rights matter, Conlogue Law LLP stands ready to help. Contact us today to learn more and schedule a free consultation!